On Aug. 26, 2016, mainstream fact checker PolitiFact published a spectacularly awful examination of Democratic presidential candidate Hillary Rodham Clinton’s claim that teachers were reporting a “Trump Effect” in schools involving an increase in harassment and bullying of some minority groups. PolitiFact relied for its evidence on an extremely flawed survey from the left-leaning Southern Poverty Law Center. PolitiFact also dubiously claimed that the bullying experts it cited felt Clinton’s claim was supported.

Clinton received a “Mostly True” rating for her claim.

Here is the statement from Clinton PolitiFact said it was fact-checking:

“Parents and teachers are already worrying about what they call the ‘Trump Effect.’ They report that bullying and harassment are on the rise in our schools, especially targeting students of color, Muslims, and immigrants.”

PolitiFact’s fact check contains a staggering number of mistakes.

- It forgives Clinton’s ambiguity about who identified the “Trump Effect”

- It conflates bullying and harassment with other behaviors in defining the “Trump Effect”

- It ignores the absence of scientific evidence for rising levels of harassment and bullying

- It ignores the absence of evidence for targeted harassment of “students of color, Muslims, and immigrants”

- It fails to report the full extent of the problems with the SPLC survey

- It falsely says the survey’s “findings correspond directly with Clinton’s claim”

- It uncritically repeats a misleading statistic from the SPLC survey report

- It fails to list as a source the document containing survey comments, suggesting …

- It fails to look at the survey comments to judge whether they supported the report summary

- It falsely describes survey responses mentioning Trump as “unsolicited”

- It fails to criticize Clinton over the failure to show Trump as a cause of increased bullying or harassment

- It tries to support its findings with vague expert testimony without explaining any would-be evidence supporting the expert opinion

- Its rating of Clinton’s claim fails to even significantly reflect the problems acknowledged in the fact check

- It follows a different set of standards than PolitiFact followed for a parallel claim from Donald Trump

We will review the meaning of the SPLC survey, then support each point in order.

“The Trump Effect: The impact of the presidential campaign on our nation’s schools”

The SPLC’s survey report carries common earmarks of agenda-driven research. The survey makes no pretense of objectivity. Note the report’s description of how it obtained its survey sample:

A link to the survey was sent to educators who subscribe to the Teaching Tolerance newsletter and also shared on Teaching Tolerance social media sites. It was open to any educator who wanted to participate. Several other groups, including Facing History and Ourselves and Teaching for Change, also shared the survey link with their social media audiences.

Click here for an example of how Teaching Tolerance framed its survey presentation.

The population taking the survey came primarily from a sub-population of teachers who subscribe to a left-leaning newsletter. Members of that already left-skewed sample would self-select their participation in the survey. PolitiFact noted that such self-selection would tend to encourage the most-interested persons to take part. The sample, then, was about as far as can be from the simulated randomness expected of a scientific survey.

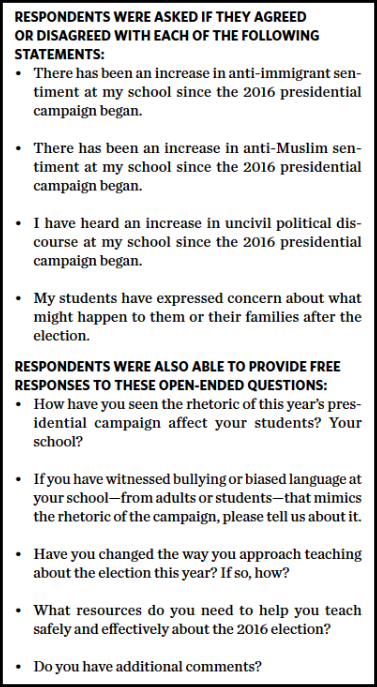

Issues addressed by the survey

The questions asked in a survey dictate the issues the survey may address. The SPLC survey asked four agree/disagree questions followed by a set of five open-ended questions. We show all ten questions in Figure A to the right. The questions allowed the survey to address the following general questions:

A. Whether individuals surveyed believed anti-immigrant sentiment had increased at their school since the start of the 2016 presidential election

B. Whether individuals surveyed believed anti-Muslim sentiment had increased at their school since the start of the 2016 presidential election

C. Whether individuals surveyed believed uncivil political discourse had increased at their school since the start of the 2016 presidential election

D. Whether individuals surveyed had heard students express concern over what might happen after the election

The four agree/disagree questions would allow the survey to address the four questions above, though based on a biased sample. The five open-ended questions would give anecdotal material that might relate to the four issues identified.

We would draw the reader’s attention to the fact that none of the survey questions addresses bullying and harassment as such. Even if the sample were randomized, these questions could not produce data reasonably supporting the idea that harassment and bullying are on the increase. That includes the second question from the open-ended list, for it mixes “biased language” with bullying and provides no baseline for comparison.

The report’s executive summary even shies away from defining the “Trump Effect” or mentioning any specific impact the Republican presidential candidate might have had on the behavior of students or teachers. Indeed, fear of deportation reported to teachers represents perhaps the most notable finding of the report, partly notable since blacks and legal immigrants seemed to share that same fear. If Trump has not called for deporting blacks and legal immigrants, we do not follow how Trump would reasonably draw blame for that mistaken belief. Calling it the “Trump Effect” seems like dramatic license at best.

Clinton’s various claims

Clinton started by saying “Parents and teachers are already worrying about what they call the ‘Trump Effect.’” In her next sentence Clinton provides some context about the nature of the so-called “Trump Effect,” but until we get to that context we have only one claim worth fact-checking: Who is “they”? Parents and teachers? Or some other entity?

As noted above, Clinton’s next sentence starts to describe the “Trump Effect”: “They report that bullying and harassment are on the rise in our schools.” The sentence does not end there, but we’re stopping to note the specific claim. Clinton tells her audience “they” report bullying and harassment are on the rise in our schools. Clinton refines the claim as the sentence continues.

Clinton, continuing her sentence, says “they” report increasing bullying and harassment focused on particular groups: “Especially targeting students of color, Muslims, and immigrants.”

So, Clinton claims 1) Parents and teachers are worrying about a “Trump Effect” defined as 2) increasing bullying and harassment in our schools and 3) focused on “students of color, Muslims, and immigrants.” The third claim we could count as three claims, for a total of five, if we wished.

PolitiFact’s fact-checking blunders

Forgiving Clinton’s ambiguity about who identified the “Trump Effect”

Clinton’s statement suggests that parents and teachers were calling the focus of their worry the “Trump Effect.” Some of the comments recorded from the survey blame Trump for various things, such as giving students an excuse to use crude language, but the creators of the survey apparently cooked up the term “Trump Effect,” not parents and teachers. So “they” is the Southern Poverty Law Center’s Teaching Tolerance group. PolitiFact does not mention this misleading aspect of Clinton’s claim in its fact check. PolitiFact later compounds its error, as we explain below.

Conflating bullying and harassment with other behaviors in defining the “Trump Effect”

While Clinton defined the “Trump Effect” in terms of bullying and harassment, the SPLC report did no such thing. The report measured, poorly, anti-immigrant sentiment and anti-Muslim sentiment since the start of the 2016 election. And it collected anecdotes about bullying or “biased language” associated with election rhetoric.

PolitiFact apparently adapted to Clinton’s needs in defining the “Trump Effect.”

Ignoring the absence of scientific evidence for rising levels of harassment and bullying

While PolitiFact spends a few words admitting to various flaws with the SPLC survey, the “Mostly True” rating belies the facts. The survey literally provides zero evidence of rising levels of harassment and bullying. As noted in our assessment of the survey, even using a randomized sample of teachers would not fix the problem because the survey does not ask the right questions. It simply does not bother to measure any increase of harassment and bullying. At most, it asks teachers for examples of bullying related to election rhetoric. The few resulting anecdotes do not make a case for any increase, as they make no measurement from a baseline.

Ignoring the absence of evidence for targeted bullying and harassment of “students of color, Muslims, and immigrants”

To make a finding of targeted harassment, the SPLC survey would have had to first measure changing levels of bullying and harassment. It did not do that. Nor did it offer any baseline for measuring how “students of color,” Muslims, or immigrants compared to other groups in terms of experiencing bullying and harassment. Even if we pretended that “anti-immigrant sentiment” was “anti-immigrant bullying and harassment” it would not follow that immigrants were targeted for bullying and harassment because the survey provides no baseline for comparison. To discover targeting, one would need to have figures showing the targeted group received more than its share compared to the baseline group. The SPLC survey provides no baseline group. Simply noting an increase for one group ignores the fact that a baseline group might have experienced an even greater increase.
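The baseline point can be made concrete with a small sketch. Every number below is hypothetical, invented purely for illustration; none comes from the SPLC survey, which collected no such counts. The helper name `relative_increase` is likewise our own.

```python
def relative_increase(before: int, after: int) -> float:
    """Fractional change from an earlier count to a later count."""
    return (after - before) / before


# Hypothetical incident counts, invented for illustration only.
focus_before, focus_after = 10, 15          # group alleged to be targeted
baseline_before, baseline_after = 20, 40    # comparison (baseline) group

focus_rise = relative_increase(focus_before, focus_after)
baseline_rise = relative_increase(baseline_before, baseline_after)

# A rise for the focus group alone does not show targeting; the rise
# has to exceed what the baseline group experienced.
suggests_targeting = focus_rise > baseline_rise

print(f"focus group rise:    {focus_rise:.0%}")
print(f"baseline group rise: {baseline_rise:.0%}")
print(f"suggests targeting:  {suggests_targeting}")
```

In this made-up case the focus group's incidents rise by half while the baseline group's double, so an observer who saw only the first figure would wrongly infer targeting.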

Failing to report the full extent of the problems with the SPLC survey

While PolitiFact dutifully noted the problems that the SPLC survey report confessed about itself, it ignored the way the SPLC skewed its sample toward those who subscribe to the Teaching Tolerance newsletter. PolitiFact merely noted that the sample was self-selected. While self-selected participation in a survey is a notable problem, it’s an even bigger problem to allow an already non-representative group to self-select its participation.

Falsely saying the survey’s “findings correspond directly with Clinton’s claim”

PolitiFact not only fails to notice the difference we describe between the survey’s findings and Clinton’s claim, it flatly tells its readers there is essentially no difference:

While their findings correspond directly with Clinton’s claim, it’s important to note that this was not a scientific survey, as the report notes.

In PolitiFact’s defense, it relied on a quotation from the SPLC report that makes it seem the report’s findings correspond with Clinton’s claim:

The center conducted an online survey of approximately 2,000 K-12 teachers and found: “Teachers have noted an increase in bullying, harassment and intimidation of students whose races, religions or nationalities have been the verbal targets of candidates on the campaign trail.”

The problem? As we documented above, the SPLC report did not ask any questions that would allow it to conclude that teachers have noted the claimed increase in harassment or bullying, etc. The only respect in which the report supports that claim would come from individual teachers making the claim via anecdote. Notably, PolitiFact produced no examples of that.

The report’s findings only correspond directly with Clinton’s claim if we pretend that an increase in anti-immigrant and anti-Muslim sentiment since the start of the 2016 election is the same thing as increasing bullying and harassment targeting “students of color,” Muslims, and immigrants. The survey’s findings would be unscientific even if they corresponded with Clinton’s claim. But the findings do not correspond. They are effectively worthless in supporting Clinton’s claims.

Uncritically repeating a misleading statistic from the SPLC survey report

The SPLC report touts the fact that its roughly 2,000 respondents recorded over 5,000 comments, and of those 5,000 comments roughly one in five mentioned Trump. PolitiFact put the statistic at the head of its list of “interesting details” from the survey.

Why is that significant? Presumably because it means that about 1,000 responses connected Trump to harassment or bullying.

Except that’s not the case at all.

The one-in-five statistic is meaningless. The survey asked five open-ended questions. Only one of them, question No. 2, dealt with bullying. That is the only question that should draw our focus if we’re interested in the election’s effect on bullying. We counted a total of 123 answers to question No. 2 that mentioned Trump, and each of the nearly 2,000 respondents had the opportunity to answer. A great many of the answers to question No. 2 did not associate Trump with bullying in any way. For example, some responses said the teacher had not witnessed any bullying, but would go on to mention Trump. Or the response would mention Trump in the context of the other part of the question, asking about “biased language.”

The website linked in the preceding paragraph allows users to search the responses by question and keyword. Our findings are easy to verify, and we compiled the results in a Google Drive document in case that website should ever disappear. We encourage readers to look at the Trump mentions. The deeper the look, the more clear the conclusion that the SPLC survey was partly an exercise in deception. And it worked on PolitiFact.

Those familiar with the data and willing to push the “one in five” statistic discredit themselves.
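The arithmetic behind our objection can be laid out as a rough sketch. The inputs are the rounded figures cited in this section (roughly 2,000 respondents, over 5,000 comments, about one in five mentioning Trump) plus our own count of 123; the results are approximations, not exact tallies.

```python
# Rounded figures as cited in the text; treat all results as approximate.
respondents = 2000        # "roughly 2,000 respondents"
total_comments = 5000     # "over 5,000 comments"
q2_trump_answers = 123    # our count of question No. 2 answers mentioning Trump

# "Roughly one in five" of all comments mentioned Trump.
trump_comments = total_comments / 5

# Share of Trump mentions that came in answer to the one bullying question.
q2_share_of_trump_comments = q2_trump_answers / trump_comments

# Upper bound on the share of respondents tying Trump to question No. 2,
# since many of those answers did not associate Trump with bullying at all.
q2_share_of_respondents = q2_trump_answers / respondents

print(f"Trump mentions overall:          {trump_comments:.0f}")
print(f"Share on the bullying question:  {q2_share_of_trump_comments:.1%}")
print(f"Share of respondents (at most):  {q2_share_of_respondents:.2%}")
```

On these rounded inputs, only about an eighth of the Trump mentions came in response to the bullying question, and that fraction overstates the case because many of those answers did not link Trump to bullying.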

Failing to list as a source the document containing survey comments

Was the PolitiFact team of Lauren Carroll and Aaron Sharockman unfamiliar with the nature of the roughly 1,000 comments mentioning Trump?

Here’s what we know: One cannot judge the significance of the “one-in-five” statistic without knowing the way respondents used “Trump” in their answers. No fact checker worthy of the name should have allowed the opportunity to slip by. The comments were published in a document separate from the main report, and PolitiFact did not link to that separate document. That, by itself, is a significant oversight. It is such a significant oversight that it segues to our next point.

Failing to look at the survey comments to judge whether they supported the report summary

One look at the list of five open-ended questions asked of respondents should have waved a massive red flag in front of a fact checker, signaling that the “one-in-five” statistic was deceptive window dressing. It should have drawn attention immediately to the separate document containing the answers to the questions. Looking at that document should have made obvious the deceit behind the one-in-five statistic. The stat may well be true, but it says absolutely nothing about Trump’s role in influencing harassment or bullying in schools. Any such information comes solely from the anecdotes that actually do something to implicate Trump in cases of harassment or bullying. There are comments where students harassed other students by telling them they would be deported when Trump was president, but there are merely tens of such implicating cases in the data, not the hundreds implied by the “one-in-five” statistic.

If we assume that PolitiFact looked at the list of comments, we have to conclude PolitiFact is guilty of a mind-boggling lack of common sense. We charitably opt instead for the conclusion that PolitiFact did not bother to look at the list of responses. While not damning of the PolitiFact team’s common sense, it reflects mind-boggling carelessness, perhaps influenced by ideological bias.

We wrote to the writer/editor team on Aug. 28, 2016 to point out this crater in their story. We have yet to receive any response or acknowledgment, and we do not yet see any change in PolitiFact’s source list or its use of the “one in five” figure.

Falsely describing survey responses mentioning Trump as “unsolicited”

We’ve reviewed evidence that the SPLC survey respondents were asked five open-ended questions. Asking a question solicits a response. We have puzzled over how PolitiFact can justify calling solicited answers “unsolicited”:

Many of these teachers, unsolicited, cited Trump’s campaign rhetoric and the accompanying discourse as the likely reason for this behavior.

The most charitable explanation we can come up with on PolitiFact’s behalf? Mentions of Trump are unsolicited so long as the questions did not specifically ask for mentions of Trump. Or maybe if the questions did not ask respondents to identify Trump as the cause of increasing harassment.

That charitable explanation, thin as it already is, crumbles thoroughly given the context. Earlier in our article, we linked to an example of the way Teaching Tolerance framed its advertisement for survey respondents. Here’s the line Teaching Tolerance used on Twitter:

Has the negative nature of the 2016 election impacted your school? Tell us.

Are prospective respondents who follow Teaching Tolerance on Twitter wondering what is meant by the “negative nature of the 2016 election”? That’s doubtful. Trump’s bull-in-a-china-shop campaign style has been the big political story for months and months. The SPLC survey was a fishing expedition looking to reel in some social science dirt on Trump. PolitiFact declined to even mention the organization’s liberal tilt:

Clinton’s source is an April report out of the Southern Poverty Law Center, a civil rights and antidiscrimination advocacy group.

Lee Stranahan gives us a convincing demonstration of the SPLC’s leftward lean.

The Teaching Tolerance survey solicited responses to its questions in the context of a presidential election featuring a Republican known for controversial rhetoric. The SPLC report may have had its title, the “Trump Effect,” before it received its first survey response. Time magazine, for example, published an article on Trump’s “racial demagoguery” with the tag “Trump Effect” back in 2015.

Of course the SPLC was soliciting responses mentioning Trump, even if the request was not specific.

Failing to criticize Clinton over the failure to show Trump as a cause of increased bullying or harassment

PolitiFact noted from the first line of its fact check that Clinton was saying the Trump campaign was responsible for the problems Clinton described:

Americans should be concerned about the effect of Donald Trump’s campaign on kids, said Hillary Clinton in an Aug. 25 speech decrying what she sees as her opponent’s campaign of “prejudice and paranoia.”

After setting the context of Clinton’s claim, PolitiFact charted the course for its fact check, with Trump’s role in causation apparently one of the planned destinations:

We wondered if Clinton is right teachers are reporting an increase in bullying and harassment and if so, what does it have to do with Trump?

Other than one in five submitted comments mentioning Trump, which we have noted carries no significance, apparently nothing. PolitiFact does not otherwise take up the issue of Trump as a cause.

Based on the non-evidence that Trump was responsible, Clinton skates away totally unscathed.

Trying to support its findings with vague expert testimony

While PolitiFact was admitting problems with the SPLC survey, it continued to gamely try using the survey as legitimate evidence. First, it summarized bullying expert Sheri Bauman to suggest the survey carries some sort of value:

Bauman said the Southern Poverty Law Center’s report should not be dismissed, as its data show important recurring themes.

Do the data show important recurring themes that somehow support Clinton’s point? Color us skeptical.

In its summary, PolitiFact gave us a sketchy group endorsement of the SPLC report:

(E)xperts in bullying told us the Southern Poverty Law Center’s survey and their sense of current trends in schools supports Clinton’s point.

The experts counted the SPLC report as evidence? Again color us skeptical. We wrote off the author of the report for reasons we will soon discuss, but we emailed the other three to find out if PolitiFact was fudging the facts. The responses from Dorothy Espelage and Jan Urbanski are less clear than we had hoped. Both stop short of clearly affirming or denying that they count the report in Clinton’s favor, though Urbanski’s reply says she carries no sense that current trends support Clinton.

We continue to wait on a reply from Sheri Bauman.

Our beef with PolitiFact is summed up in our email message to the three experts. Expertise is properly a breadth of knowledge on a particular topic that produces a high-quality judgment based on that body of knowledge. Fact checkers should draw out enough of the justification backing the expert judgment to help readers accept the judgment based on more than just trust. PolitiFact gives us so little from its experts that we cannot verify what it says about their opinions.

Failing to significantly reflect the problems acknowledged in the fact check with its “Truth-O-Meter” rating

Even overlooking all of its other mistakes, it strains credulity for PolitiFact to give Clinton’s claim a “Mostly True” after the article shredded the reliability of the SPLC study. PolitiFact acknowledged the survey was not useful for judging any kind of trend. PolitiFact produced no other supporting information aside from its dubious claim of support from its experts.

Just as importantly, PolitiFact identified Clinton’s claim as the type of generalization the survey could not support:

(I)t would be inaccurate to extrapolate from the survey that bullying and harassment are generally on the rise across the country. Rather, it is more a collection of teachers’ anecdotal experiences.

Without providing a shred of solid support for Clinton’s claim, PolitiFact gifted Clinton with its “Mostly True” rating. A collection of anecdotes cannot support Clinton’s claim about a general rise of harassment and bullying in our schools. Adding in the alleged targeting of minority groups makes the claim even harder to support. Yet PolitiFact somehow overlooks all that for Clinton’s sake.

Following a different set of standards than PolitiFact followed for a parallel claim from Donald Trump

On June 9, 2016, PolitiFact published a fact check of Republican presidential candidate Donald Trump’s claim that crime is rising. PolitiFact could not find recent data, so it rated Trump according to old data. The older data did show violent crime rising in some areas, but since experts said it could be a blip instead of an upward trend, PolitiFact rated Trump’s claim “Pants on Fire”—its lowest possible rating.

Why didn’t PolitiFact use the old and available data to rate Clinton? Why didn’t PolitiFact lower Clinton’s rating when the facts failed to show an upward trend in school harassment and bullying?

An upcoming article at PolitiFact Bias will delve more deeply into this comparison.

Research notes: Establishing Trump as a cause?

Is it possible that Trump’s campaign rhetoric is causing an increase in harassment and bullying in our schools? Sure, it’s possible. But we have no firm data to support the idea. As noted, we received responses from two of the experts PolitiFact cited. Both shared comments that cast doubt on the ability of social science to show Donald Trump served a significant role in causing increased harassment or bullying.

Dorothy Espelage:

It appears as if folks are trying to make causal claims linking Trump’s commentary to the ways in which children treat one another, and even in the perfect world of longitudinal surveys, this is a challenge or even impossible—too many confounds. Unfortunately, the NCES trend data on bullying has measurement issues etc., but that data might shed some light on the trends of bullying after the election, but any increase could due to many things, like schools not addressing bullying, schools implementing non-evidence-based practices, etc. Then you add to the mix of complexity that Clinton’s campaign is running the ad with kids watching him over and over again.

Jan Urbanski:

(A)lthough current events certainly can have an impact in a school environment, there are many factors that could influence the rate of bullying in a school. So even when trend data for this year is available, to state any change was caused by political rhetoric is oversimplifying a complex issue.

Summary

Through its series of errors, PolitiFact passes off a handful of unidentified anecdotes as reasonable evidence to conclude that harassment and bullying of certain minority groups is on the rise, caused by Donald Trump’s campaign rhetoric.

PolitiFact pulls off the type of deception any underhanded campaign manager would like to create.

PolitiFact produced no good evidence harassment or bullying are on the rise in our schools. PolitiFact produced no good evidence any minority groups are increasingly targeted as victims of harassment in our schools. PolitiFact produced no good evidence a “Trump Effect” serves as the cause of any of these other things for which there is no good evidence.

PolitiFact produced the opposite impression across the board.

That’s bad.