Last year, the Cleveland Plain Dealer stopped publishing stories under the PolitiFact Ohio banner. We read with interest the criticisms of PolitiFact coming from Plain Dealer staffers.

On June 7, 2014, Plain Dealer reader representative Ted Diadiun lauded the start of the paper’s Truth in Numbers fact check effort. He explained how the Truth in Numbers system improved on PolitiFact’s “Truth-O-Meter.”

“(U)nlike the previous truth-squad features here – and in most other news operations – Truth in Numbers will do its job without the distracting and unnecessary baggage of any cutesy little dials or meters, drawings of noses or smiley faces.”

PolitiFact might argue the necessity of its cutesy little meter. There’s little doubt that its trademarked “Truth-O-Meter” contributes to the popularity of its fact checks. But we agree with Diadiun that reliance on a linear rating tends to distort the facts instead of making them clearer.

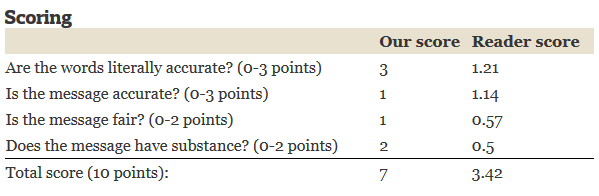

To remedy the problem, the Truth in Numbers Project assigns four different ratings to political claims. The rating ends up with an appearance like this:

The Truth in Numbers system resembles one of the systems I considered when planning Zebra Fact Check. My proposed system would have rated political statements in three different ways but without combining the scores. Note that Truth in Numbers ends up providing a cumulative total score of 7 for the rating in the example.

We applaud the Plain Dealer for improving on the “Truth-O-Meter.” But the new system provides several avenues for bias to move the ratings.

- The category descriptions are ambiguous. What does it mean when literal accuracy gets a “2” on a scale of 0-3? How does one objectively rate fairness? What counts as “substance”?

- The total score misleads as much as a cutesy graphic. If cutesy graphics pigeonhole nuance, then so does a total score that sums four rating categories. How many different ways can a political ad receive a “7” rating? Is that a good thing?

- A panel of three editors decides the final ruling. Like PolitiFact, the Truth in Numbers Project convenes a panel of editors to rule on political statements. Getting different views of the problem counts as a good thing. The problem comes from the anonymity of the process, which institutionalizes any existing newsroom bias.

- Ratings will apply to compound political claims. Diadiun says the Truth in Numbers Project will not try to narrow its rulings to single claims of fact. Every added truth-claim in a political ad dilutes the accuracy of an already ambiguous system.
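The question of how many ways an ad can earn a “7” can be made concrete with a short count. The sketch below is only an illustration: the assignment of scales to categories (two rated 0-3 and two rated 0-2) is an assumption, since the only scale confirmed here is 0-3 for literal accuracy, and the name `ASSUMED_MAXIMUMS` is hypothetical.

```python
from collections import Counter
from itertools import product

# ASSUMPTION: hypothetical maximum scores for the four rating categories.
# The article only says the scales run 0-3 or 0-2; which category uses
# which scale (apart from literal accuracy, 0-3) is a guess made here.
ASSUMED_MAXIMUMS = (3, 3, 2, 2)


def ways_per_total(maximums):
    """Count how many distinct category-score combinations yield each total."""
    ranges = [range(m + 1) for m in maximums]
    return Counter(sum(combo) for combo in product(*ranges))


counts = ways_per_total(ASSUMED_MAXIMUMS)
for total in sorted(counts):
    print(f"total {total}: {counts[total]} combination(s)")
```

Under these assumed scales, 18 different combinations of category scores add up to a 7, so the total by itself says little about where an ad fell short.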

The Truth in Numbers Project also brings an element of crowdsourcing to its process. Note in the above example a “Reader score” appears next to the Plain Dealer’s findings. Perhaps that’s just a curiosity, like PolitiFact’s “Lie of the Year” readers’ poll officially having nothing to do with the editors’ pick for Lie of the Year. Diadiun’s article suggests, however, that reader comments help direct the research. Whether that helps or hurts the project depends on how the Plain Dealer’s journalists filter and apply the comments.

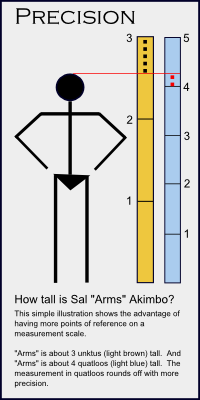

A Matter of Precision?

There’s one comment from Diadiun I just can’t let pass (bold emphasis added): “A panel of three editors reviews the reporting and determines the score. But the precision of the ratings makes it far more an objective than subjective process.”

As pointed out above, the more claims a rating covers, the less precise the rating. Suppose an ad contains five claims of fact. That ad is much less likely to receive either a “0” or a “3” on literal accuracy than an ad making a single claim of fact. The ratings are done on short scales, 0-3 or 0-2, that strongly encourage rounding. So the total score will tend to represent the sum of four rounded-off numbers.

Given the commitment to slap one rating on sets of statements and the practice of combining short-scale ratings into one number, Diadiun’s statement is preposterous. The Truth in Numbers system welcomes imprecision. Watch for Truth in Numbers scores to gravitate strongly toward the center of the scale.
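A rough way to see the pull toward the center is to compare a one-claim ad with a five-claim ad on the 0-3 literal accuracy scale. The sketch below rests on assumptions that are not part of the Truth in Numbers method: it treats each claim as scored separately on 0-3, treats every combination of claim scores as equally likely, and takes the ad's rating as the average rounded to the nearest whole number. The function names are hypothetical.

```python
from itertools import product

SCALE_MAX = 3  # literal accuracy is rated 0-3


def rounded_ad_score(claim_scores):
    """Average the per-claim scores and round half up to a whole number."""
    n = len(claim_scores)
    # Integer arithmetic equivalent to floor(mean + 0.5).
    return (2 * sum(claim_scores) + n) // (2 * n)


def extreme_share(num_claims):
    """Share of all claim-score combinations whose ad rating is 0 or SCALE_MAX.

    ASSUMPTION: every combination of per-claim scores is equally likely.
    """
    combos = list(product(range(SCALE_MAX + 1), repeat=num_claims))
    extremes = sum(1 for c in combos if rounded_ad_score(c) in (0, SCALE_MAX))
    return extremes / len(combos)


for n in (1, 5):
    print(f"{n} claim(s): share of extreme ratings = {extreme_share(n):.3f}")
```

Under these assumptions, half of all single-claim ads land on a “0” or a “3,” while only about 4 percent of five-claim ads do. Real ads will not follow a uniform spread of scores, so the exact figures mean little; the direction of the effect is the point.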

The Cleveland Plain Dealer deserves credit for acting to improve its fact-checking model. While the new system does improve on PolitiFact’s system in some minor ways, it continues to allow too many ways for bias to contaminate its findings. The rating system, contrary to Diadiun’s expectation, almost guarantees imprecision through insensitive rating scales and murky descriptions of the rating categories.

The problems are too numerous for Truth in Numbers to improve dramatically on the PolitiFact model.